In this Guide…

A housing chief exec we work with calls TSMs “merely a proxy of what we've got to do”. This article argues that tenant satisfaction measures are gameable, that the year-on-year leap is the tell, and that real improvement happens inside customer journeys. TSMs should be an output, not a target.

If you're preparing a board paper on your association’s TSM performance right now, or watching a peer report a double-digit year-on-year leap and wondering what changed, this article is for you.

A few weeks ago, the chief executive of a large North East housing association told her senior leadership team that tenant satisfaction measures are “merely a proxy”.

It was the most honest thing I’ve ever heard a housing leader say about TSMs.

The argument is short: tenant satisfaction measures are a regulatory proxy, not a service-improvement target. Treating them as a target both produces gameable scores and starves your organisation of the data its leaders actually need.

TSMs are “merely a proxy”

The regulator designed TSMs for comparability across the sector, not to run your service-improvement programme. They are deliberately standardised, deliberately blunt, and deliberately the same across every association. That is what makes them useful to a regulator. It is also what makes them limited as an internal management tool.

That sounds obvious until you sit in a board meeting where every paper, every commentary, every benchmark is built around the headline number.

The behaviour this triggers is exactly the behaviour we’d warn you off. I’ve written before about how TSM scores distort housing strategy when they’re treated as the destination rather than a downstream summary; this post looks at that distortion in more detail.

TSMs are gameable

If you wanted to engineer a flattering TSM score without improving the tenant experience, you could. You wouldn’t even need to lie. The score can be heavily influenced by how you measure, when you measure, and who you choose to include.

I’m not accusing anyone of doing this. I’m saying that any method that can be gamed will, somewhere, be gamed — and a board comparing itself to peers should know that. The housing sector is not unique in this. Every regulatory metric ever published has produced a set of optimisation behaviours. TSMs are no different.

Big single-year jumps in TSM scores almost always reflect methodology or sampling change, not a step-change in service.

Service quality at scale doesn’t move that fast. A housing association of 25,000 tenants is a vast operational system. Changing repairs cycle times, complaints handling, ASB response and tenancy management all at once, and seeing it land in a tenant population’s lived experience inside 12 months, is genuinely hard.

I’ve seen it done. But it’s rare.

On the other hand, methodology changes can easily move the headline number that fast. To borrow a line from a housing colleague: “From there to there in 12 months stinks a little bit.”

Did the relevant tenant population or sampling frame change? Were any households removed under exceptional circumstances? Obviously any methodology changes must be disclosed, but how many of us look beyond the headline scores?

The same questions apply to your own score. A flattering result that isn’t real is a worse problem than an honest bad one, because it removes the case for the work that actually needs doing.
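Here is a rough sketch of the arithmetic, using invented numbers. Suppose a lower-satisfaction group drops out of the sampling frame. The headline rises even though satisfaction within every remaining group is unchanged. The segment names and figures below are hypothetical and do not come from any real TSM return.

```python
# Hypothetical illustration only: the segments and figures are invented.
# It shows how changing who is counted moves a headline satisfaction score
# even when no tenant's experience has changed.


def headline_score(segments: dict[str, tuple[int, int]]) -> float:
    """Return overall satisfaction (%) from {segment: (respondents, satisfied)}."""
    respondents = sum(n for n, _ in segments.values())
    satisfied = sum(s for _, s in segments.values())
    return 100 * satisfied / respondents


# Year one: (respondents, satisfied respondents) per hypothetical segment.
year_one = {
    "most_tenants": (800, 560),        # 70% satisfied
    "recent_complainants": (200, 40),  # 20% satisfied
}

# Year two: satisfaction within each segment is identical, but the
# sampling frame no longer includes the recent complainants.
year_two = {"most_tenants": year_one["most_tenants"]}

print(headline_score(year_one))  # 60.0
print(headline_score(year_two))  # 70.0
```

The result is a ten-point leap, and nothing about the service moved. That is why questions about the sampling frame deserve as much attention as the headline itself.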

What forward-thinking housing leaders measure instead: per-journey transactional friction

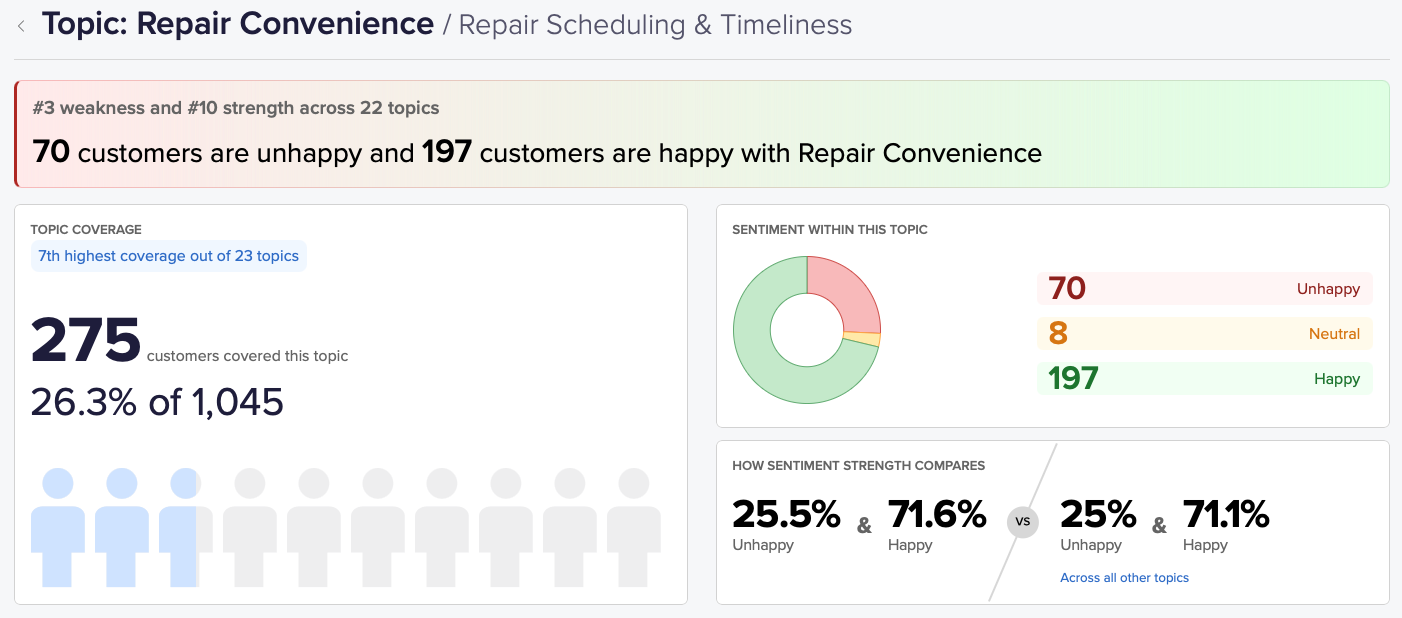

None of this means TSMs are useless. It means they’re the wrong place to point your improvement effort. The leaders we respect most use TSMs as an output of doing the right work, not as a target to optimise toward. The “right work” is per-journey transactional measurement. For every tenant journey, you should know:

- What’s working

- Where the friction is

- How big a problem that friction is

That third question is the one boards usually can’t answer. Senior leaders already have the hunches. They get the angry letters. The chief exec hears about Mr Stainthorpe, the archetypal tenant who’s “always moaning”, and the customer service team plays it down: “Oh yeah, we know about him.”

So the chief exec is left guessing whose hunches are correct.

What changes when per-journey data arrives is that leaders can stop guessing. The chief exec I mentioned earlier put it this way:

I had a hunch about these things, but we’d lacked quantification — we’d never had the size of the problem before.

The data could surprise her in the other direction, too. One repairs issue she’d assumed was a major problem turned out to be smaller than she thought, so the budget could be redirected.

The right action isn’t always the score-moving action

Once you stop chasing TSM as a target, you can finally act on issues that affect a small share of tenants but matter disproportionately — the actions that won’t shift your headline number, but will make you a better landlord.

The chief exec I’ve been quoting did this almost the day the per-journey data landed. She set up a steering group to improve how the organisation updates tenants on anti-social behaviour cases. ASB affects a small percentage of tenants, and the action will not meaningfully move the TSM. But, in her words, “It’s the right thing to do.”
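The arithmetic behind “will not meaningfully move the TSM” is simple. A group’s contribution to the headline is capped by its share of respondents. Below is a minimal sketch with invented shares and satisfaction figures; none of them come from the association quoted above. It assumes the affected group makes up the same share of respondents as of tenants, and that nobody else’s satisfaction changes.

```python
# Hypothetical illustration only: shares and satisfaction figures are invented.
# Assumes the affected group makes up the same share of survey respondents
# as of the tenant population, and that nobody else's satisfaction changes.


def headline_shift(share_affected: float, before_pct: float, after_pct: float) -> float:
    """Change in the headline score, in percentage points, when only one
    group's satisfaction changes."""
    return share_affected * (after_pct - before_pct)


# Small population: 3% of tenants have a live ASB case; better updates
# lift their satisfaction from 30% to 60%.
asb = headline_shift(0.03, 30.0, 60.0)

# Large population: 40% of tenants had a repair this year; a smaller
# improvement, from 55% to 65%, among a much bigger group.
repairs = headline_shift(0.40, 55.0, 65.0)

print(f"ASB: {asb:.1f} points, repairs: {repairs:.1f} points")
# ASB: 0.9 points, repairs: 4.0 points
```

A large improvement for a small group barely registers, while a modest one for a large group moves the number. A board that only rewards score movement will keep choosing the second.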

Boards that only sponsor score-moving actions end up with cleaner dashboards and a worse organisation. The most damning pattern is the one where everyone knows what’s wrong, but no one is allowed to fix the small-population issues because the dashboard doesn’t credit them. The signal of a healthy housing CX function is exactly the opposite: some of the work is visibly not about moving the score, and leadership recognises and praises that.

Treat the proxy as a proxy

Tenant satisfaction measures are a regulatory proxy. The regulator gets value from comparability; your organisation gets value from the per-journey work underneath. Confuse the two and you’ll either game the score or be gamed by your peers — and starve your leaders of the data they actually need to prioritise.

Treat TSMs as an output of doing the right work. Run your improvement programme on per-journey transactional data the regulator doesn’t see. Be honest with your board about what’s moving the score and what isn’t.

Treat the proxy as a proxy. Run your programme on the work underneath.

If you're rebuilding your housing VoC programme around per-journey measurement, with TSMs as an output your organisation is comfortable being judged on, we'd be glad to talk. See how we work with housing associations.