Your feedback platform has a dashboard. Your dashboard has charts. Your charts show that scores are moving. And nothing has changed. The era of data-as-progress is over.

Here's what the new bar looks like, and how to know if you're clearing it.

A mid-to-large housing association ran ten transactional feedback surveys. Repairs. ASB complaints. Asset moves. General customer service. The full picture of how tenants experienced the organisation, captured across every major touchpoint, ticking away for years.

Their collective verdict? “It was rubbish.”

Not because the scores were bad. Because nothing changed. The surveys ran, the data came in, the numbers went up and down, and at the end of it all, the CX lead told us:

We weren’t getting any insight that led to any change. It was just ticking away in the background.

If that sounds familiar, this article is for you.

The era where having a feedback dashboard counted as progress is over.

Organisations still running programmes that produce charts but not change are running half a CX function at best. Upgrading to a bigger platform won’t fix it, but there is hope…

You’re Not Imagining It.

If your feedback programme has been running for over a year and your service hasn’t materially improved, the instinct is to blame execution… Wrong process. Not enough internal buy-in. Management not acting on what the data shows.

Maybe that’s part of it, but it’s rarely the whole story. In our experience, the platform and the vendor can do real damage that gets ignored because people feel uncomfortable blaming a tool they spent significant budget on.

Tracking a Number Is Not the Same as Understanding It

A score that goes up and down is not insight. It is noise. Most enterprise feedback platforms are selling noise and calling it intelligence.

Moving numbers tell you something is happening. They do not tell you what is driving it, which customers are affected, or where to focus. The housing association had scores tracked across all ten surveys. What they didn’t have was any visibility into what was causing those numbers to move.

They lacked granular insight and prioritisation guidance… They simply had no way to answer the question every CX leader eventually gets asked:

Where do we focus our resources for the biggest impact?

The team cannot act because they don’t know where to act. The board asks “what are we doing about this?” and the honest answer would be: not much. Because the platform gives you a problem without a direction.

The most telling detail from this organisation: zero service recovery. Not slow service recovery. None at all.

They had been collecting feedback from unhappy customers and doing nothing with it. That is worse than not collecting it.

The Bar Has Risen

I hear it directly from CX leaders across the sector: people are tired of paying significant money for a platform that gives them data and some charts. It was progress in 2015. It no longer is. The new (and rightful) expectation is that a feedback programme creates change — not that it documents the current situation.

So if you’ve been running a programme for 18 months without seeing results, you shouldn’t feel foolish for buying what you bought. Vendors are experts at promising that their unicorn-powered platform will change the world, but the reality is that tech doesn’t deliver improvement. Action does.

Upgrading to a Bigger Customer Feedback Platform Does Not Solve This Problem

When a VoC platform isn’t delivering, a normal response is to look for something more powerful. More features. More AI. More customisation options.

This is the wrong diagnosis, and Qualtrics and Medallia are built to exploit it. Their pitch, essentially, is: “Your programme isn’t working because you haven’t got enough tech.” So they offer you more tech: more survey types, more analytics, more dashboards, more AI.

But our housing association’s problem wasn’t a lack of sophistication. They had ten surveys running. They had a dashboard. They had scores. What they also had was a team of three people running a feedback programme for a major housing association, with no ability to act on insight even when it was technically present.

As the CX lead put it: they didn’t have the hours in the day to “unpick the data”. More analytics in that situation doesn’t produce more action. It produces more backlog.

The gap is not between collection and understanding. It’s between collection and doing.

You might be thinking: “Our current platform is genuinely too basic, we need more capability.”

But ‘more of the wrong stuff’ is still the wrong stuff.

More analytical features are not the same as more actionability. For a team of two or three running feedback for thousands of customers, the capability that matters is: does the platform make it obvious who needs a response, and does it put that response within reach of the person who can give it?

What a Different Platform Transition Looks Like

The housing association didn’t leave their previous platform because it was technically deficient. Their previous provider wasn’t lacking features. What it was lacking was a human.

The specific reasons they switched:

- No actionable insight. They couldn’t answer the question “where do we focus?” because the platform surfaced numbers, not directions. Nothing in the data pointed clearly to what needed attention or who needed a call.

- No strategic partner. The support available was technical: logging tickets, resolving platform issues. There was nobody helping them think about whether the programme was working, whether the surveys were asking the right questions, whether the insight was being used effectively. Just a helpdesk number.

- Response rates that were consistently low, driven by an SMS-heavy channel approach that wasn’t landing with tenants.

What they were explicitly looking for in a new arrangement: “a resource, not just a tool.” They weren’t shopping for better features. They were shopping for a partner who would help them get change, not just data.

The absence of human expertise wasn’t a nice-to-have that was missing. It was central to why the programme had been ticking away in the background for years without producing anything. A platform that generates data but offers no guidance on what to do with it is a reporting tool. They needed a change tool.

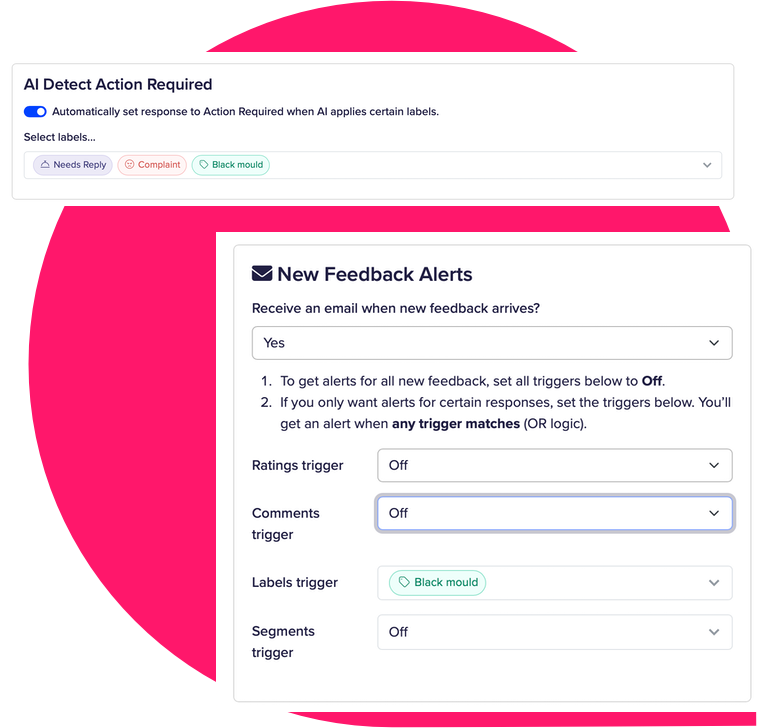

When a tenant mentions damp and mould in their feedback, or mentions that they had to chase three times before anyone responded, the right person should get an alert and act. Not next month, but now.

In CustomerSure, that happens through labels: tags that trigger real-time notifications to the team member who can actually fix the problem.

The CX lead understood it immediately during onboarding: use labels to identify who needs a response, then prioritise those with the biggest issue.
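To make the idea concrete, here is a minimal Python sketch of label-based triage. It is an illustration only, not how CustomerSure implements labels: the keyword rules, the owner names, the priority numbers and the function names (`label_comment`, `triage`) are all assumptions made up for this example.

```python
from dataclasses import dataclass

# Hypothetical keyword rules: a comment gets a label if any keyword appears in it.
LABEL_RULES = {
    "damp_and_mould": ("damp", "mould"),
    "repeat_contact": ("chase", "chasing"),
}

# Hypothetical owner and priority per label (lower number = more urgent).
LABEL_OWNERS = {
    "damp_and_mould": ("repairs_lead", 1),
    "repeat_contact": ("customer_service_lead", 2),
}


@dataclass
class Alert:
    priority: int
    owner: str
    label: str
    comment: str


def label_comment(comment: str) -> list[str]:
    """Return every label whose keywords appear in the comment."""
    text = comment.lower()
    return [
        label
        for label, keywords in LABEL_RULES.items()
        if any(keyword in text for keyword in keywords)
    ]


def triage(comments: list[str]) -> list[Alert]:
    """Turn raw comments into alerts, most urgent first."""
    alerts = []
    for comment in comments:
        for label in label_comment(comment):
            owner, priority = LABEL_OWNERS[label]
            alerts.append(Alert(priority, owner, label, comment))
    return sorted(alerts, key=lambda alert: alert.priority)


if __name__ == "__main__":
    feedback = [
        "Repair was fine, thanks.",
        "I had to chase three times before anyone responded.",
        "There is damp and mould in the bedroom.",
    ]
    for alert in triage(feedback):
        print(f"[P{alert.priority}] {alert.owner}: {alert.label} -> {alert.comment}")
```

The point of the sketch is the shape of the workflow rather than the matching logic: nobody has to read every comment to find the tenant who needs a call, because each labelled comment arrives with an owner and a priority attached.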

Not more data. More doing.

Does Your Platform Save Time, Reduce Pressure, and Create Change?

Three questions to apply to your current programme right now:

Does the platform surface who needs a response — automatically, without manual review? If your team has to read through feedback to find the customers who need a call, that’s a tax on their time. A good platform does the triage for them.

Do you have a strategic partner helping you get more from the programme, or a helpdesk to log tickets? The difference is whether someone is invested in your outcomes. Technical support keeps the platform running. A strategic partner helps you answer the question “why aren’t our scores improving?” and works with you until they do.

Can you point to a service change in the last six months that came directly from what your feedback told you? If you’re looking at the ROI of your feedback programme honestly… can you name something different that is happening in your organisation because of what customers told you?

If the answer to all three is no: you’re not running a CX programme. You’re running overhead.

The Programme That Ticks — or the Programme That Changes

Collecting data was progress once. Acting on it is progress today. The organisations that understand that distinction are running programmes that can show the board a change that happened because of what a customer said. The ones that don’t are watching their scores tick up and down and wondering why nothing is different.

Before you re-sign your current contract, apply the three questions above. Not to justify a switch — to get honest about what you’re actually getting.

If the programme isn’t producing change, the answer is rarely “try harder with what you have.” It’s usually “the platform and the relationship need to be different.”

For the operational reasons why feedback programmes stall even when the data is good — the internal failure modes that compound the vendor ones — read our article on why Voice of the Customer programmes fail even with an enterprise-grade platform.

Ready to move from dashboards to change?

If this resonates, let's talk about what a feedback programme built around action — not just data — looks like for your organisation.