
A team of two or three running feedback for thousands of tenants isn’t unusual in social housing. But most platforms aren’t built for that reality. Here’s a model that is.

One housing association we work with had run ten transactional feedback surveys for years — across repairs, complaints, moves, and general customer service. But by the CX lead’s own account, zero service recovery. Because nothing made acting on the feedback feasible for a team of three.

That isn’t unusual. It’s normal for social housing CX teams in the UK right now.

This article is for the CX or customer service operations lead who’s in exactly that position: running a Voice of the Customer programme with a small team, watching the scores go up and down, and wondering whether a ‘real’ programme is actually achievable without more headcount.

The answer is yes. But only if the platform does the routing, triage, and alerting — so your team doesn’t have to touch every response manually. Here’s what closing the loop in practice actually looks like.

Small CX Teams in Social Housing Are the Norm — Not the Exception

If you’re running a social housing feedback programme with two or three people, you’re not unusually resourced compared to your peers. Every housing association our team works with tells the same story: small teams dealing with more regulatory pressure and no budget for new headcount.

At the same time, the obligations have grown. Tenant Satisfaction Measures (TSM) reporting requires housing associations to measure and evidence tenant satisfaction across specific service areas. The Housing Ombudsman is publishing more determinations every year and setting clearer expectations about how quickly complaints should be resolved. The “collect data and file it” approach of five years ago was always flawed, and now it no longer satisfies regulators or tenants.

If you feel like your resourcing problem is unusual, a sign of organisational dysfunction, or something you need to fix before you can run a proper programme… It isn’t, and you don’t. The model that works has to work for a team of two or three, because that’s who’s doing this job.

Collecting Feedback and Acting on Feedback Are Two Different Things

Most small housing CX teams are doing the first and not the second.

Running surveys is not the same as running a feedback programme. A feedback programme produces change. Running surveys produces data. The gap between the two is where most housing associations are stuck.

The housing association we mentioned at the top had used their previous platform for years. The surveys were running, the data was coming in. But when we asked them to describe what had changed as a result, the answer was deflating: “We were just tracking a number.” Scores moved up, scores moved down. Nobody could unpick what was driving the changes. Nobody knew which tenants were most dissatisfied or what they specifically needed. And so, nothing changed.

The honest admission from that organisation’s CX lead made the problem concrete: “If 5% of feedback needs us to respond, at the moment we’re doing 0%.” Not doing service recovery badly — not doing it at all, despite regulatory pressure, despite knowing it was the right thing to do.

This is a structural problem, not a motivation problem. With a small team, manually reading every response, triaging what needs action, and routing it to the right person is simply not feasible at scale. The instinct to add spreadsheets, weekly review meetings and manual escalation logs makes things worse, not better. It adds load to the very team that’s already stretched.

There’s a tempting counter-argument here: “Surely tracking scores tells us enough? We know if things are getting better or worse.” It doesn’t. Scores tell you something changed. They don’t tell you what changed, for which tenants, or where to focus your effort. “We don’t know who’s got the biggest issue” is a direct quote from that same CX team — and it’s the gap that score tracking alone can’t close.

The Fix Isn’t More People

A three-person team can run a serious multi-touchpoint feedback programme if the platform handles routing, triage, and alerting automatically, leaving the team to act on what matters rather than reviewing everything.

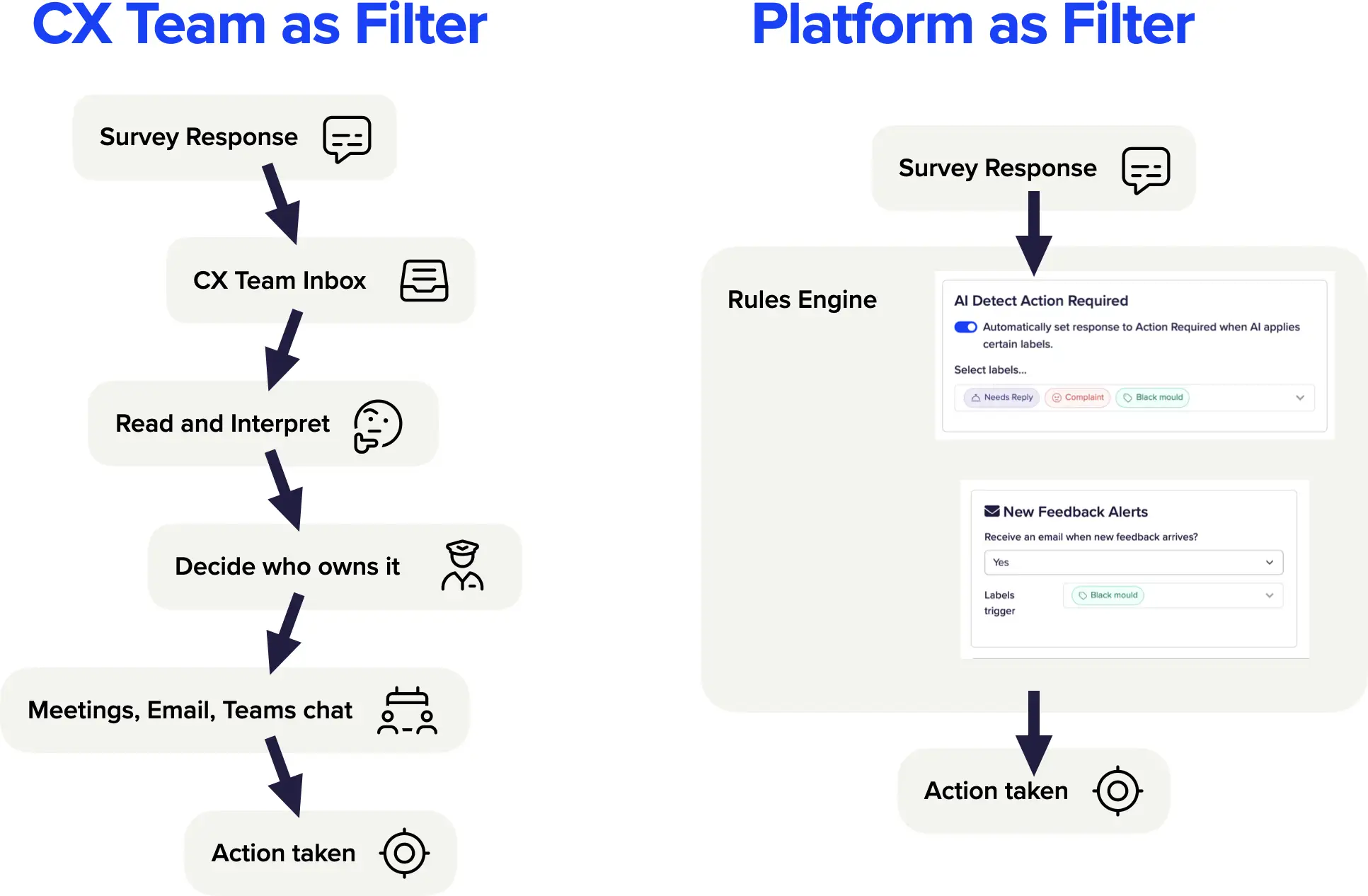

The shift required is conceptual before it’s practical. In a manual process, the CX team is the filter: every response, in some form, passes through them. Someone has to read it, categorise it, decide whether it needs action, and if so, work out who should handle it. That process doesn’t scale to ten surveys and hundreds of responses a month.

In an automated process, the platform is the filter. Responses come in, are analysed in real time, and specific conditions trigger alerts that go directly to the right person. The CX team only sees what genuinely needs their attention.

The CX lead at that housing association articulated the vision precisely: his colleagues do the service recovery and follow-up; he reports scores to the board; the platform handles the routing, notification, and triage:

“I just want it to do its magic without any human intervention.”

That’s not an unrealistic aspiration. Software should get out of the way and help people to do their jobs.

In practice, this means surveys run automatically across service journeys when a job closes or a transaction completes. Responses flow in. The platform identifies what needs attention. Alerts go to the person best placed to act. The CX lead’s dashboard shows trends and scores. Nobody maintains a spreadsheet.

Team size stops being the binding constraint. “We just don’t have the manpower to do this at scale” is a response to a manual process, not an automated one. A team of three can cover ten touchpoints if the platform is surfacing only the responses that genuinely need a human response.

You Need Action Triggers, Not Filing Categories

The part of this that surprises people most in practice is what actually makes the routing work.

Topic/theme and sentiment analysis is table stakes for any CX platform. It’s genuinely useful: it tells you that 23% of repair complaints mention communication failures, or that satisfaction with damp responses has dropped since January. That’s your service improvement data. It feeds trend reports, board packs, quarterly reviews. It helps you get better over time.

But it doesn’t help you recover a situation for an individual tenant this afternoon.

What closes that gap is adding categorisation (in CustomerSure, Labels) on top of the analysis, not just as another analytical lens but as a routing mechanism. Each label is tied to an alert rule: when this label is applied to a response, this specific person gets notified immediately, with the tenant’s feedback attached.

A label like “damp and mould” shouldn’t be a reporting theme you review at the end of the month. It’s a trigger: the moment it appears on a response, a named staff member gets an alert. They pick it up. They call the tenant. The loop closes, today.
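The label-to-alert idea can be sketched in a few lines of Python. This is an illustration only, not CustomerSure’s implementation or API: the `Response` and `Alert` classes, the `ALERT_RULES` mapping, the `route` function, and the email addresses are all hypothetical names invented for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Response:
    """A single piece of tenant feedback (hypothetical structure)."""
    tenant_ref: str
    comment: str
    labels: set[str] = field(default_factory=set)


@dataclass
class Alert:
    """A notification for one named person about one labelled response."""
    recipient: str
    label: str
    tenant_ref: str
    comment: str


# Hypothetical alert rules: each label maps to the person who should act on it.
# Labels without a rule (for example a pure reporting theme) trigger nothing.
ALERT_RULES = {
    "damp and mould": "surveyor@example.org",
    "customer had to chase us multiple times": "repairs.manager@example.org",
}


def route(response: Response, rules: dict[str, str]) -> list[Alert]:
    """Return one alert per label on the response that has an alert rule."""
    return [
        Alert(rules[label], label, response.tenant_ref, response.comment)
        for label in sorted(response.labels)
        if label in rules
    ]


response = Response(
    tenant_ref="T-1042",
    comment="The mould in the bathroom is back after last month's repair.",
    labels={"damp and mould", "repairs"},
)
alerts = route(response, ALERT_RULES)
for alert in alerts:
    print(f"Alert {alert.recipient}: [{alert.label}] {alert.comment}")
```

In this sketch the “repairs” label still exists for reporting, but only “damp and mould” has a rule attached, so only that label produces an alert. The CX team never appears in the routing path.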

Across our recent onboardings with housing associations, the bit that gets people excited is the labels conversation. Not the topic analysis, not the dashboards — the point where the client realises “this label means someone gets a call today.”

The labels housing associations start with are usually the obvious ones: damp and mould, hazards, air quality. These are the categories where Ombudsman risk is attached and a delayed response has direct consequences. But the same housing association we mentioned earlier quickly extended beyond hazard labels to capture process failures: “customer had to chase us multiple times,” “something was fixed and is now broken again.” Each one routes a response to the person best placed to investigate and fix it.

This is also the answer to the objection that service recovery at scale is too resource-intensive for a small team. The objection is right if service recovery is manual, but it doesn’t hold up when teams get savvy with automation. When categorisation handles the routing, the CX lead isn’t personally managing every recovery. The work goes directly to the colleague who can actually make the call.

What This Looks Like When It’s Working

A second housing association we work with has labels, triage, alerts, and assignment operating in their service recovery workflow. Alerts route to key staff members who pick them up. Loops close. The programme works.

The question they’re now working through with us isn’t whether service recovery is possible for a team their size. It’s how to get more of the right responses triaged faster, and how to use the data to improve the service, not just recover from failures. You can read about similar journeys in the Connect Housing and Beyond Housing case studies.

At this stage in a working programme, you’d expect to see: TSM scores providing a baseline; labels providing the granularity to understand what’s driving them; frontline staff receiving alerts and closing loops without CX team involvement in each case; the CX lead reporting scores and trends to the board rather than managing individual responses.

You shouldn’t be paying for “a platform”

A three-person CX team can’t be experts in survey design, response rate optimisation, TSM reporting best practice, VoC strategy, and label configuration — on top of everything else they do. For these teams, the value of built-in expertise is acute.

Getting a feedback programme to work well requires knowledge that takes time to develop: what questions to ask, how to structure surveys for the best response rates, how to interpret score movements, which labels to configure first and how to write the alert rules. Most small teams don’t have that knowledge on day one, and don’t have the bandwidth to develop it while they’re also running the programme.

The “data and dashboards” era is over. Organisations that ran on their previous platform for years (collecting data, producing charts, watching scores move) have learned that technology alone doesn’t produce closed-loop outcomes. What’s replaced it is a different expectation: do this tool and this team save me time, reduce headcount pressure, and create actual change? Not: “does it produce a sophisticated report?”

One CX lead we work with framed it clearly:

“We’re buying a resource, not just a tool.”

CustomerSure includes survey design review, response rate guidance, and strategic input as part of the relationship — not as billable extras. When the programme is set up, we work with the team on what to measure, how to configure it, and how to improve over time.

For a stretched three-person team, that’s the difference between a programme that drives change and one that, as the same lead put it, “just ticks away in the background” — surveys running, scores tracked, nothing changing.

Getting Started

The constraint in social housing CX isn’t team size. It’s whether the platform does the work that a small team can’t do manually: routing, triage, alerting, integration. Get that right and three people can run a programme that genuinely changes things for tenants, for frontline teams, and for the numbers you report to the board and regulator.

If you’re building or rebuilding a housing feedback programme, the questions worth asking of any platform are practical ones: Does it label and route automatically? Can it alert the right person without the CX team in the loop? Does it connect to your systems without manual data handling? Is there expertise behind it, or just software?

See how this works for a team like yours

CustomerSure works with housing associations of all sizes — helping small CX teams run serious feedback programmes without spreadsheets, manual triage, or enterprise overhead. If that’s the problem you’re trying to solve, let’s talk.